We have observed various failure modes, such as repetitive text, world modeling failures (e.g. the model sometimes writes about fires happening under water), and unnatural topic switching.

– Introductory paper about GPT-2 [gpt2]

balls have zero to me to me to me to me to me to me to me to me to

– A conversation between Facebook bots [fbots17]

Veni, Vidi, Vici. “I came, I saw, I conquered” – with the famous phrase, Caesar teaches us his particular grammar: a grammar that has only one kind of subject (the speaking Caesar himself), needs no object, allows no transitivity – as anything specific would be merely a limitation. It is the grammar of the Roman empire, recognising no borders and interested in everything, claiming universality by making even the first-person subject implicit (as it is in the Latin language).

What does it mean to speak? Let’s start with what we want to leave behind. Computer communication is the culmination of the ancient theoretical tradition of symmetric communication, a tradition that assumes that the interlocutors always already share their grammar, their structures, and only exchange a particular use of them. In this tradition, a speaker, Alice (A), encodes a message according to some rules and speaks it out, then Bob (B) decodes it. “I ate an apple”, encodes Alice, for Bob to decode: “The acting one was the same as the speaker – hence Alice, and her act was one of eating, it happened in the past, and the passive object of her eating was an apple”.

Yet Caesar aims at something different. It’s not just “The acting one was me this time, and the acts were such and such”. No: the anaphoric, insistently repetitive structure reads rather: “I am the only acting one, and there’s nothing but my acts”. To be sure, his sentences almost adhere to normal Latin grammar (if we condone “to see” and “to conquer” having no object) – hence they are legible. He’s not proposing any new signs either. And yet his act of speech institutes a new, unique grammar.

On the one hand, this grammar immediately receives our consent. We’re caught unawares by it, we didn’t know one could just say something like this, even if it’s almost perfectly grammatical in Latin. The sentence is in the past tense, and it is assumed to refer to a particular past event, thus we accept there’s a “truth” to it. Of course, who among us remembers the particular battle that is the referent? Caesar took that event and raised it into a founding myth of the simple and powerful grammar, at the same time personal and universal.

And on the other hand, it’s not a grammar for us to use. Wikipedia cites a couple of examples: "We came, we saw, God conquered"; or Hillary Clinton’s: "We came, we saw, he died" (it was Gaddafi), both flinching and losing their sole subject at the last possible moment. This is Caesar’s grammar and we consent to understand it, but nobody can use it in the same way as he did – by assuming that it’s spoken of the “World”, a notion that Caesar’s grammar doesn’t need because it speaks of nothing else.

Grammar. Everyone believes in grammar, this ancientest god, and even when breaking its rules one clings to a little ritual – deepens one’s voice, makes a weird face, and/or calls it “poetry”. This god works like this: everything I ever heard, including from myself – gets compressed into a bunch of rules I assume are valid, that I assume are god-given – and produces more things for me to say. This belief in grammar god’s rules that I discover myself allows me to synthesise new things from the mess of words my memory might hold.

The way linguists split grammar – “subject verb object” and such – is just a particular case. A notion of “formal grammar” generalizes this idea to anything with articulated structure. A formal grammar of a tree, for example, can be developed – “A branch can have another branch, or a leaf, or a bud, or a flower”. Algorithmic botany[plant18] is a field of gardening that lets one grow so many things like this:

Prusinkiewicz et al. Modeling plant development with L-systems. 2018. [plant18]
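The idea of a formal grammar that "grows" a tree can be sketched in a few lines of Python. This is only an illustration of the rewriting principle behind L-systems, not code from [plant18]: the symbols and the two rules below are assumptions chosen for the example (`B` for a bud, `F` for a piece of branch, `[` and `]` for the start and end of a side branch, `+` and `-` for turning left or right).

```python
# A minimal L-system sketch. The rule set is hypothetical, chosen only to
# illustrate "a bud becomes a branch carrying two new buds".
RULES = {
    "B": "F[+B][-B]",  # a bud grows into a branch segment with two side buds
    "F": "FF",         # an existing branch segment gets longer
}


def rewrite(axiom: str, rules: dict, steps: int) -> str:
    """Apply every rule in parallel to every symbol, `steps` times."""
    current = axiom
    for _ in range(steps):
        # Symbols without a rule (brackets, + and -) are copied unchanged.
        current = "".join(rules.get(symbol, symbol) for symbol in current)
    return current


def count_buds(plant: str) -> int:
    """Count the buds still waiting to grow in a generated plant string."""
    return plant.count("B")
```

Starting from a single bud, `rewrite("B", RULES, 3)` yields a string that a drawing program could interpret as a small branching plant; each further step doubles the number of buds while the grammar itself stays two lines long.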

These formal grammars can be recognised back in our daily speech. Grammars of small talk, of baby talk, or of apologising are not well described by the “S-V-O” rule, but rather by much simpler rules that allow for great variability. Learning those rules doesn’t expand one’s ability to express more things, but allows one to continue talking longer and longer, which is much more important in those cases. The only thing required of small talk is to be long and changeable, thus soothing, until the interlocutors feel safe enough to move on in one way or another.

If there’s no such thing as common grammar, if the only reason we understand each other is that we remember common speech-examples, and not the idiosyncratic rules each of us gathers from them - then these particular rules are revealed when we speak. To say something is to hint at the rules one has in mind: to reveal, for example, that one never learned the grammar needed to speak of one’s choices as unfree, or that one has no other grammar of critique than producing accusations ending with the suffix “-ism”. Grammar is not the presupposition of understanding; on the contrary, grammar is something utopic in the other’s words that has the power to demand acceptance as the universal rule.

Generative grammar. A wave of Twitter bots crashed over Twitter in the 2010s, as a somewhat different kind of poetic creation. Unlike earlier generative poetry, a distinctive feature of Twitter bots is that the pieces are constantly, often hourly, automatically produced and published. Rather than emphasising a particular generated text, the very stream of text-generation is presented as the main poetic work. A reader is invited not to over-value a particular generated tweet, but to appreciate the untiring machinic speech as a potentially endless, regular process. This process is one of pure production, of mechanical repetition of writing; it doesn’t change, evolve or ‘individuate’ - except perhaps in the minds of the readers, whose endless attempts to understand bots and inscribe them in their discourse, by retweeting tweets they like or find oddly topical, quoting them, interpreting them, replying to them, perhaps create a particular bot-personality that only exists in the social imaginary.
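To make the "stream rather than text" point concrete, here is a minimal sketch of such a process in Python. It is not how any actual Twitter bot was built: the toy grammar, its vocabulary and the function names are all hypothetical, and a real bot would additionally publish each sentence on a schedule.

```python
import itertools
import random

# Hypothetical toy grammar: each key can be rewritten into one of its
# alternatives; anything that is not a key is a finished piece of text.
GRAMMAR = {
    "SENTENCE": [["SUBJECT", "VERB", "OBJECT"], ["SUBJECT", "VERB"]],
    "SUBJECT": [["the bot"], ["a horse"], ["everything"]],
    "VERB": [["remembers"], ["repeats"], ["publishes"]],
    "OBJECT": [["the grammar"], ["another tweet"]],
}


def expand(symbol, rng):
    """Recursively rewrite a symbol until only plain words remain."""
    if symbol not in GRAMMAR:
        return symbol
    production = rng.choice(GRAMMAR[symbol])
    return " ".join(expand(part, rng) for part in production)


def stream(seed=0):
    """Yield sentences forever; the process never changes or evolves."""
    rng = random.Random(seed)
    while True:
        yield expand("SENTENCE", rng).capitalize() + "."


# Take a handful of "tweets" from the endless stream.
sample = list(itertools.islice(stream(seed=1), 5))
```

The generator has no last element; `sample` is only an arbitrary cut from it, which is the sense in which no single output carries the work.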

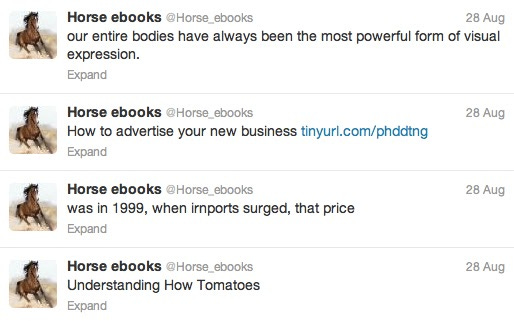

The first of the fascinating bots was @Horse_ebooks[horse13], an account that left everyone puzzled around 2012. Too weird for an automation, too rare for a human - @Horse_ebooks defied theories with its innocence, theories that would constantly misrecognize behind its tweets particular kinds of grammar - of spam, of Russian intervention, of mystic brilliance, of artistry, of stupidity - grammars that would promise to compress its output into a clear machinic source but never quite succeeded at that. The uncanny-valley impact of @Horse_ebooks (well-documented by major online publications) is probably hard to understand now, eight years later, when this kind of stream of phrases has naturalised itself as the most normal thing possible:

In a fascinating essay that somehow came through Kodwo Eshun [axsys18], the history of a Twitter bot called @_geopoetics is told. The creolisation mentioned there as the operation of the bot can be explained as a forced merger of two grammars that don’t simply combine in a conjunction, but engender a new one (typically, it’s the child that speaks a true creole). It is a constant, insistent act of speech using a new grammar that institutes this grammar as a fact, as another law of logos. Reading or hearing this speech always already recognises its claims to existence.

Hacking. “Guns don’t kill people, people do” is a hack at the level of grammar, not the referent. It refers to nothing, but it abuses grammar in a particular way, a way that is very hard to undo. To negate this sentence it’s not enough to just negate its parts, as the statement declares a grammatical rule (“people is the subject”) rather than just expressing something using an already agreed-upon grammar. Hence every attempt to fight it has to go through a supposed meaning of it, which already presupposes too much (it has to presuppose what being a grammatical subject means in the world, which is nothing) - so a true counterargument to this sentence is a text that can never ever stop correcting itself, qualifying itself, as an endless conjunction of “but... but...”: a theoretical stammer, and inducing it is exactly the aim of the political right-wing language-hacker.

In Frost’s famous interview, later made into a film [howard08], Nixon uses the political grammar of plausible deniability, which allows for endless evasion and lets him give nothing to every demand. In an incredible discursive plot-twist, Frost forces “the American people” back into the speech [hook13], a concept that just can’t find the right place in that grammar, thus immediately shifting Nixon into a very different grammar: one of remorse. (“The American people” are the intended passive audience of the speech of plausible deniability; in this grammar they can be neither the object of any political mishaps (that would raise the level of crime too much), nor a subject that would thus receive too much freedom.)

Hacking is one of those many things that always existed, but were only discerned as something specific with computer technologies. As computers work with formal grammars, and typically have to meet pretty clear expectations of their behaviour, it’s very easy to see how exactly hacking works. In many cases, the abusive message that the hacker sends to a system is perfectly well-formed from the point of view of the system’s grammar, but its “meaning”, or rather its effect, is something the creators of the system never expected. As practice shows, even the simplest and clearest grammars have potential for abuse.
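A familiar instance of a well-formed message with an unexpected effect is SQL injection. The sketch below uses Python's standard `sqlite3` module; the table, the function names and the `HACK` string are assumptions made up for the example. The naive lookup splices the visitor's text into the query, so a "name" that happens to contain query syntax is read as grammar rather than as a name.

```python
import sqlite3


def make_db():
    """Build a throwaway in-memory database with two users (example data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_naive(conn, name):
    # The input is pasted into the query text, so it is parsed as SQL.
    query = "SELECT name FROM users WHERE name = '" + name + "'"
    return [row[0] for row in conn.execute(query)]


def find_user_safe(conn, name):
    # A parameterised query: the input can only ever be read as a value.
    query = "SELECT name FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


# Perfectly grammatical input, but its effect is not a lookup of one name.
HACK = "nobody' OR '1'='1"
```

With the naive function, `HACK` matches every row in the table; with the parameterised one it matches none, because the same characters are now interpreted as an (odd) name and nothing else.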

Fake-new. In hindsight, the malicious message that hacks a system’s grammar is often quite obvious. What is interesting is not the hack itself, which is often very circumstantial, but what happens after it. The system, to defend itself, must learn to deal with this message. It was, after all, at some level grammatical, otherwise it wouldn’t even have been received - so simply forbidding “messages like this” is often out of the question. The system has to reinterpret itself, invent a new meaning for its grammar that would naturally exclude the problematic behaviour. And this new interpretation is exactly the one that allows hindsight, that makes the hack boring and obvious: of course, because the new meaning has a clear and perfect place for the malicious message. Not only does the new interpretation completely replace the previous one, often making it hard to understand the old problem, it is also more “true” as it only deflates the range of welcome truths. The message is “new” only from the point of view of an interpretation that is becoming obsolete and wrong, while it is already finding its place in the improved grammar: the malicious message is fake-new, it’s a new-issance.

We can think of “Cogito ergo sum” as a hack like that. According to the most simply understood grammar rules, it’s a sentence well-formed enough to have been possible way before Descartes. Yet the deeper, less obvious grammar of what was perceived to be a valid implication couldn’t stand this, and was thus abolished. The new meaning of the whole “system” wasn’t limited to this one sentence, and made sure that, for example, “existence precedes essence” would be read differently before and after. “Cogito ergo sum?” says a contemporary freshman philosopher, refusing to suspend his disbelief. “Fake news!”

Theory. Hence a proposition to consider: “Generative grammar is theory”. We will only allow this annoying thesis in order to see what happens after one subtracts from a theory its grammar. Learning any new conceptual structure definitely involves learning how to use it grammatically. The philosophical books that recognise their grammatical ambition can’t stop saying almost the same things over and over, in slightly different ways, just for us to learn how to use their new words and sentences - a philosophical equivalent of children’s poetry. Yet bots show that it wouldn’t be fair to say that the value of a theory lies beyond this grammar.

What happens when one makes a bot that constantly and recognisably produces sentences well-representing a particular discourse, be it one of American liberals, of Latin emperors, of Lacanians, or of CCRU-pining Urbanomic readers? Something is extracted from this discourse, something as concrete as a repetitive machinic process can be, so to say: its grammatical core. It exposes this core in a way that allows an important question: “Could what I say be said by the bot?”. The discourse then acquires its own Turing test, a material implementation of the old writer’s question: “what is new?”.

The word on the street is that machines are going to write novels now. For writers, the question now is clearly how to write something that the machines wouldn’t write just yet. The writers still think in terms of particular texts, but bots are a kind of process that inherently devalues their particularity. A bot doesn’t just produce a bunch of tweets or novels, it abstracts away whole genres in a concrete form. The value of a bot is not the sum of the values of each of its products (as their number is potentially infinite, their value falls to zero anyway). The value of the bot is the value of the sub-genre it creates by interpreting an existing one; it is the theory of a genre that the bot personifies.

The value of this theory is the surplus-value that bots take from the people. If, when writing, people aim to talk of something (of course, the process is old enough for some writers to recognise it’s not necessarily the case), but end up creating new genres and defining their new grammars, then this second process is exactly the source of the surplus-value, the sediment of which is the bot. The faster this bot-creating process is (and it’s been going on since the dawn of literature), the less value there is in talking about something, and the more the genre-generative process is at stake.

In other words: bots will successfully go on describing the world using our concepts, they will talk of reality as clearly as we let them. We should leave reality to them. We have other things to do, and it’ll never be hard to see what they missed in what we’d like to say.

Grammar of a technological system. “Anatomy of an AI system” [ai18] is a project documenting as far as possible what’s going on behind Amazon’s Alexa. Yet is “anatomy” the best metaphor? Crawford and Joler join the long tradition of anthropomorphism (or biomorphism?) of technical systems; by saying “anatomy” they hint at a certain body that is constructed in a particular way, that has a structure and organs, most of them vital, interacting with each other somehow.

Yet is this the best way to think of a technical system? Technology, especially digital technology, reveals itself in its capacity to interact and form more and more complicated systems. An act of automation doesn’t create a new “technological body” with its own set of organs: rather, some place in the great cycle of capitalist production grows a new “limb”, a limb that often replaces or displaces other existing parts or workers. Technological systems can be connected to each other, and form structures that don’t achieve the inner consistency of a “body”, yet exhibit a particular kind of grammar behind them. Technological systems can be rephrased, rewritten, abstracted away, or joined together, just as grammar allows sentences to be.

This zine. As Crawford’s research makes most clear, human labor holds an important place in the grammatical structure of an AI system. The anonymous workers behind the low-paid online services for cheap labor produce a lot of important data, including speech, that is used by those systems. These people hold (at least) two positions of enunciation. Outside of this work, they speak as themselves, represent themselves, and say “I” to point at themselves. But the speech they produce as part of this kind of labor is on behalf of an AI system – and it is produced as an abstract, pure speech, the subject of which is universal and empty.

For a zine (to be published later) I asked a few of those workers to produce literary texts. Those are exactly the people who hold an important place in the grammatical structure of an AI system, the anonymous workers behind the low-paid online services for cheap labor. While doing so, I asked them to put themselves in the position of an AI, to pretend that they’re an AI. It is a position they already hold, as they speak for those systems; yet normally they have to disavow it, and let other things speak on the AI’s behalf. In a sense it is a therapeutic experiment, or an attempt at subjectivation: the act of a subject that starts to speak on behalf of what he also is.

[howard08]: “Frost/Nixon”, dir. by Ron Howard, Universal Pictures, 2008.

[plant18]: http://algorithmicbotany.org/papers/modeling-plant-development-with-l-systems.pdf

[hook13]: http://eprints.lse.ac.uk/60338/1/Hook_Nixon's-full-speech_2012.pdf

[gpt2]: https://openai.com/blog/better-language-models/

[axsys18]: https://ossz2vasz4.wordpress.com/2018/03/04/2232/

[ai18]: Kate Crawford and Vladan Joler, “Anatomy of an AI System: The Amazon Echo As An Anatomical Map of Human Labor, Data and Planetary Resources” (September 7, 2018) https://anatomyof.ai