Web 2.0: Genealogy of AI

How the slick looks of Web 2.0 brought about Artificial Intelligence

The idea that "Artificial Intelligence" is a magical thing that comes from science to defy every possible intuition is pervasive in our minds, no matter how much the scientists themselves disavow the term. There is certainly a historical account of how some of the typical ML techniques were first developed in academia and then, in some cases, deployed to help businesses achieve particular ends. Still, this account doesn't explain the particularly powerful and creepy place that AI holds vis-a-vis our labor, our creativity, our freedom and our future, at least in the general imagination.

I want to propose an alternative theory, supported and inspired by the way the term AI is used in the industry: Artificial Intelligence is not a technology, but a particular relationship between labor, power, consumers and commodity production[1]. This structural relationship wasn't invented in academia, but came to be as a step in the historical evolution of the technology business. In this essay I want to highlight a particular moment in the history of the Internet that framed the very thing we now call "AI": the dawn of Web 2.0.

[1] Probably the most important article in the current wave of the re-theorisation of AI in terms of labor is: Kate Crawford and Vladan Joler, “Anatomy of an AI System” (September 7, 2018), https://anatomyof.ai .

Dotcom as a failure of evaluation

In the '90s, the "dotcom" was a magic substance that would immediately add value to any particular business or commodity: "Pets.com" was millions of times more valuable than "pets". (Notice the superficial similarity to AI right now: doesn't "Pets AI", whatever it might be, sound more valuable than "pets"?) The Internet had already made a lot of things available in new, more immediate ways, and evaluating the change it brought wasn't straightforward: the Internet promised (and eventually delivered) completely new forms of business, of consumption, and of production. A case can be made that the simplistic evaluation of "dotcoms" was a good starting point. Still, an uncertain bet on the future can hardly hold its value when everyone bets the same way. An idea that something is somehow valuable is good to explore using seed capital; it quickly turns to waste when the same bet, lacking any real business plan, is made in the millions.

So in the early '00s the dotcom crash happened: suddenly it became clear that those naively evaluated dotcom businesses weren't necessarily going to turn a profit, and that having a "dotcom" suffix in the company name was only one piece of the business puzzle. This crash was a real cultural turn: things that had seemed good and valuable suddenly started to seem worthless, and the need to evaluate things differently became more and more pressing, especially as, with time, investors' money became available again.

This failure to evaluate the Internet properly was quite understandable. The '90s Internet definitely had a lot of valuable content - arguably, already quite enough content. The way one would find and access it was by memorizing a URL (often a domain ending with, yes, "dot com"), or by somehow finding such a URL, e.g. in some form of media, even print media, that would publish a regular curated list of cool new things on the Internet. Knowing a lot of those URLs was a big part of being an expert on the Internet, as there wasn't any other, better way to navigate it and find the good stuff. It's no wonder that the single most important means of accessing the valuable content - the domain - became, in itself, an over-valued fetish.

The problem with all the valuable internet content, from a capitalist perspective, was, however, that it wasn't easy to find, wasn't uniform enough in form, wasn't easy to control and to sell... All these internet wonders: the crazy photographs, the inventive fanfics, the proto-blogger theorising, the proto-indie-game .exe's, the art from the scene - all of it was already there, but none of it was easily discoverable and monetizable. To put it in one word: Internet content wasn't yet a good commodity.

Web 2.0 as an ideology & a style

It is to remedy this problem that Web 2.0 emerged, first and foremost seen as a style. This style sought to look for where the value actually was, and that alone made it feel like such a breath of fresh air. Still, the way it defined good style had a pretty clear economic justification that I want to explore.

As a guide, let's use the essay by Paul Graham, such an influential figure - both as a writer-ideologue and as an investor - that his framing might even have had the power to fix the main points of Web 2.0 for everyone. He speaks of the three main "ingredients" of Web 2.0: "Ajax", "Democracy", and "Don't maltreat the users". Each of those requires a critical translation from the ideological language, which I will try to provide.

Ajax, or the new idea of form

While Ajax is usually considered a particular technology ("Asynchronous JavaScript and XML"), its influence and meaning were much broader and less specific (and went far beyond the "XML" part). Ajax redefined the way we think about Internet pages to such an extent that the younger generation of developers doesn't even know what it is, and just takes the possibilities it provides for granted: not as a particular independent technology, but as the way things normally are.

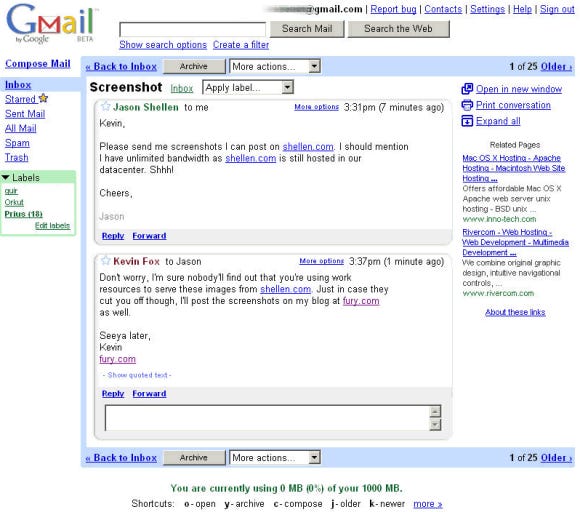

Early Gmail, the first Ajax-powered thing everybody used

What Ajax did was completely redefine the idea of a Web page. The Web page, the "hypertext document", used to be a singular entity on the web, supposed to have its own particular content and to be accessible directly via a particular URL. (The dotcom fetish starts right here, as you can see.) With Ajax, the web page became just a point of access to a variety of content and actions, an interface that structures things in its own particular way, and not necessarily around pages and URLs. It allows for all of those moving parts: comments, ratings, threads, voting, chats, widgets... Arguably, it is this shift of perspective that was the tricky part about Ajax, and that demanded so many "tutorials" just to give everyone enough practice to stop thinking of a web page as a single piece of content.

My hypothesis is that the deeper idea behind this shift was an attempt to disentangle form and content on the web in general. (From now on, it's always form as in form/content, not "<form>".) One part of the reason why "Web 1.0" was so hard to commodify is that every author of a webpage was in full control of its form and content, and could allow themselves any kind of weirdness and idiosyncrasy in their approach, even from one page to another. All of those different, strange webpages were hard to parse, to unify, to subsume under a simple marketable form. Many technologies (often under the epithets of "semantics" and, later, "accessibility") tried to impose more specific forms on the creators of pages, demanding that a Web page be not just "any HTML under a URL", but something more legible: supposedly to users, but actually to the emerging forms of the Internet's idiosyncratic governance (such as Google's search engine). The most notable failure among those technologies was probably XHTML, which imposed a whole masochistic-ritualistic order on the frontend engineers, who went to enormous pains to ensure compliance with its strict rules.

The goal of disentangling form and content was to make sure that a business retains control of the form, while the user provides the content. It is in the control of the form (including the infrastructure that supports it) that the power of an Internet company lies. Indeed, most of the biggest Internet platforms we know right now operate via a very tight control of the form - Instagram and Twitter being the clearest examples. XHTML and similar rigid technologies didn't go far enough in this direction, and so never produced any party with a strong interest in their adoption and evolution.

Ajax, however, is different, as its main idea is to create a page that feels like a very specific form to be filled in by the user. In this case the power is split very clearly: the users create the content, and the business makes that very easy for them (and incentivizes it in other ways). By introducing such a split, this inconspicuously named technology started the major shift we have witnessed in the way the Internet looks: from a million weird websites to "4 platforms full of screenshots of one another".

This idea that "content will follow form" can also be used to explain much of the Web 2.0 visual style, including the new approaches to favicons and logos. The emphasis on simple and clear copy, a "clean interface", round and well-defined edges, and consistent visual languages felt very natural, though relativising those principles makes their goals clearer. Quite a few web designers raised on Web 2.0 cite Bauhaus as their inspiration, not even noticing how the functionalist formula was inverted, and likely just finding a retroactive stylistic defense of their much simpler quest for the simplest commodity. IKEA is their real inspiration: a company that fixed the form before learning to use it for every possible function.

And to go even more speculatively in the subjectivity-forming direction: can't we talk of a new kind of nerd emerging in the early '00s, not the nerd of weird stature and obscure lore knowledge, but the nerd of "I like clear rules and I don't create my own content", with basic hipster clothes, reductively liberal worldviews, and an undereducated, "I am only doing my own particular job"-style image of society? PG's maxim of "Don't maltreat the users" might actually be rephrased as "Don't make the users think", echoing "Don't Make Me Think", the title of a book very popular and influential at that particular time.

"UGC", or on the curatorial labor

All forms of art that would fit the form imposed by a corporation were given a name at the time: User-Generated Content. The participle "Generated" already hints at a particular attitude towards the user's labor, which wasn't really recognized as such. Another way of understanding PG's maxim "Don't maltreat the users" is as a demand "not to get in the way of them working for you": a demand to make it very easy and comfortable to create and publish all kinds of things on a platform, something that Ajax enabled quite well.

The impulse to create and publish things on the Internet for free had long been there, and providing users with a particular form for it and the corresponding benefits was an attractive deal for both parties. But the most important feature provided to the user was the curatorial service: the ability to find things to one's liking, to avoid things that nobody would like, and to make sure one's work reaches a broader audience.

The necessity of curatorial work was probably the most obvious discovery of Web 2.0 (and yet this necessity seems to be constantly in danger of being forgotten). However, the main principle of controlling the form and leaving the content to the users wouldn't allow for the old-school, editorial approaches to it. "Curatorial labor by specific authorities doesn't scale", the word on the street went. And so it had to be dismantled into the smallest pieces, such as "voting" and "ratings", but also things like "wikis" and, very importantly, "tags", and portrayed as "Democracy", which was a great scheme to make sure it was accepted as an obvious good. (Imagine being explicitly asked to try and evaluate some weird-looking food as part of the process of getting some good food...). Some of the most important sites of Web 2.0, such as Digg or Reddit, are built completely around this new idea of how to curate content. And it is this kind of curatorial labor that many contemporary AI systems end up relying on: GPT-2, for example, was trained on pages that Reddit users had curated before.

Curatorial labor is, like programming, a kind of labor that sits on a different level from other kinds of labor, in the sense that it abstracts over other labor and has a certain power over it. Curatorial labor actively changes the value of other products of labor: by evaluating them, by working to make sure they fit a particular form, by exposing them to more people. It is around those curatorial features that some of the most common and powerful forms of "AI" in our lives ended up being built, such as the smart timelines, the recommendations, the targeted ads, and the various knowledge-extracting methods.

In the dichotomy of form and content, if you add the romantic (and very Web 2.0) idea that the users' creativity is a force that just keeps on giving, curatorial labor is the labor needed to make sure their creations fit the form well. By evaluating things numerically, providing a taxonomy, weeding out the bad items, and creating incentives for the producers, the users would not only perform enough labor to make sure the content is curated well for themselves; they would also give it enough formal structure and legibility, something that is necessary to analyse the content automatically.
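To make the mechanics of this dismantled curation a bit more tangible, here is a minimal sketch in Python. It is not the code of Digg, Reddit or any real platform: the names `Item`, `Platform`, `hide_below`, `front_page` and `export_rows` are all hypothetical, invented for illustration. It only shows how a handful of tiny user actions (a vote, a tag) add up to a numerical evaluation, a taxonomy, a weeding-out of bad items, and a table of rows that a machine could read.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    """A piece of user content, reduced to the form the platform fixes."""
    item_id: str
    author: str
    score: int = 0                           # net votes: the numerical evaluation
    tags: set = field(default_factory=set)   # user-provided taxonomy


class Platform:
    """Hypothetical platform: it owns the form, the users supply everything else."""

    def __init__(self, hide_below=0):
        self.items = {}
        self.hide_below = hide_below   # items scoring below this are weeded out
        self._voted = set()            # (user, item_id) pairs, one vote per user

    def publish(self, item_id, author):
        self.items[item_id] = Item(item_id, author)

    def vote(self, user, item_id, up):
        # Each user may evaluate each item once; repeated votes are ignored.
        if (user, item_id) in self._voted:
            return
        self._voted.add((user, item_id))
        self.items[item_id].score += 1 if up else -1

    def tag(self, user, item_id, label):
        # Any user may attach a label; this sketch does not track who did it.
        self.items[item_id].tags.add(label.lower())

    def front_page(self, tag=None):
        # The curated view: hidden items removed, the rest ranked by score.
        visible = [
            it for it in self.items.values()
            if it.score >= self.hide_below and (tag is None or tag in it.tags)
        ]
        visible.sort(key=lambda it: (-it.score, it.item_id))
        return [it.item_id for it in visible]

    def export_rows(self):
        # The same labor, seen from the other side: uniform, machine-legible rows.
        ordered = sorted(self.items.values(), key=lambda it: it.item_id)
        return [(it.item_id, it.score, sorted(it.tags)) for it in ordered]
```

Note that nothing in the sketch ever looks at the content itself. The front page and the exported rows are built entirely out of the users' evaluations, which is exactly the point: the curation and the legibility are both by-products of the same small acts of labor.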

The throne of the data-driven businesses

An arbitrary human-made artifact is good for neither selling nor automatic analysis. The concept of a commodity has long been used by economists to describe a thing that is ready to be sold on the market, lacking any features that could impede its sale. While fungibility, or being abstract enough to be replaceable by a similar thing, is cited as the main feature of a commodity, we can see that we currently expect even more: the ability to fit particular forms (be it a container, size, style, format, type of socket or whatever), or a clear legibility of quality - counts of likes, performance of bought ads, and other metrics like that. Web 2.0 was an ideology that facilitated the creation of such commodities on the Internet.

Apart from sales, various forms of automation also benefit from the same, or a very similar, set of characteristics. The ability to fit a container or to compare numbers of likes might be useful to a consumer, but it is even more important to a machine, which can't extract features from content without a particular form being fixed. We could say that a good commodity is not only good for selling, but also good as an object of machine learning. Web 2.0 did not just make the web salable; it also made it quantifiable, legible to a machine. The machine that reads those commodity-forms well accelerates the search for a perfect commodity that was already under way. To give an example: Instagram is a planetary engine to find/create a perfect visual commodity, called the influencer, and all its users and developers are laboring on that.

The curatorial algorithms in the early Web 2.0 sites, such as Google's PageRank, Gmail's spam filter, or Reddit's timeline, might have been technically too simple to be considered Artificial Intelligences. Yet the data they formed, and the feedback loops between users and business they created, are the data and feedback loops that contemporary AI systems rely upon. The scientific discoveries allowed more and more complex forms to be analysed, and more and more perfect and complex commodities to be created on the Internet, and yet the place they took, and the power they assumed, had already been created and defined by the market seeking to extract value from the World Wide Web.

I am Valentin Golev, an independent researcher in the philosophy of technology (affiliated with The New Centre for Research and Practice), and I will publish my thoughts via this newsletter. Do subscribe!